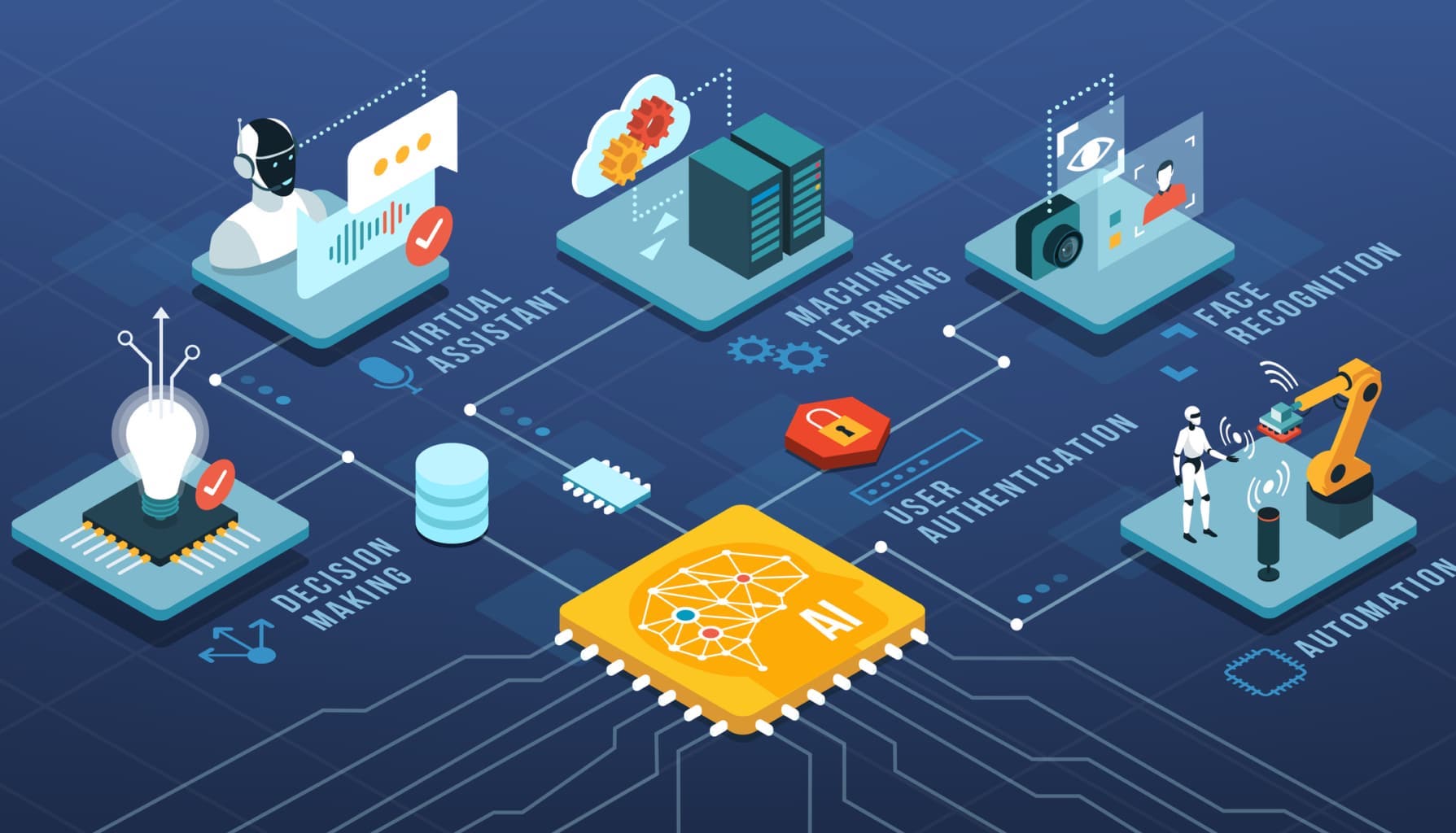

IT Researches Ltd is an information technology company and international computer research centre offering a wide variety of ‘AI Powered™’ services and solutions. IT Researches has extensive experience in delivering cutting-edge technology solutions to enterprise and mid-sized businesses. We have a deep understanding of service delivery and the in-house specialist skills required for the most complex integration projects. We are a leading independent provider for the selection, deployment, advancement and ongoing management of Artificial Intelligence (AI) solutions.

Why us?

Experience

Years of experience serving global clients.

We are ready to serve you.

Simplicity

We deliver simple solutions that work!

Quality

A dedicated quality assurance team upholds our quality standards.

Customer Service

World-class customer service that gives you trusted, fast support.

Cost Effective

Our geographical location gives us a cost advantage that we pass on to our clients.

Partners

To ensure that we can consistently deliver the finest technology solutions available, we have developed a number of strategic partnerships and alliances.

The team at IT Researches comprises elite, diligent and proficient members with vast global experience and proven track records. Our company is staffed by talented full-time IT professionals who take every project as a challenge and work hard to make it a success.

We have a pool of the finest IT professionals, who produce superior software to suit our clients’ requirements and help them meet their goals. Our culture is built on passionate, innovative and meticulous professionals who share our vision of helping businesses, institutions and corporate sectors. Our management staff is characterized by a uniquely strong combination of knowledge and experience.

We employ subject-matter experts who have made AI their subject of academic study. Because we can strip away the marketing gloss and provide real interpretations of the capability of vendor technology, our clients can be assured that the solution we deploy will deliver the operational and financial benefits envisaged at the planning stages. Our approach is that an AI solution is developed to meet the stated objectives of the business (not the other way around), so that results and expectations can be measured effectively.

IT Researches is a high-spirited company striving to offer distinctive IT services and solutions to its global clients. As a client-centred and quality-conscious IT company, we offer a wide spectrum of IT services and solutions to help our clients meet their business needs on time and cost-effectively.

We are passionate about delivering outstanding software products and solutions with guaranteed cost savings. We focus our efforts and investments on building the right expertise, infrastructure and talent to continuously improve services and processes.

Operating in both the private and public sectors, we understand our clients’ business requirements and provide software solutions using high-quality people with relevant expertise, working either on site or remotely as part of the client’s team. We also take advantage of the very latest high-definition video conferencing technology to enhance project communication, which is provided free of charge as part of our service.

In media